Coursera Lets Instructors A/B Test Their Courses, Experiments With Automated Coaching

Coursera recently shared details about two of its platform features: Automated Coaching and A/B testing.

In a recent post on Coursera’s engineering blog, the company shared details on its A/B testing platform for course content.

A/B testing, or split testing, is used to determine which of two or more versions of something performs better, and it is used extensively to test web pages. Here is how Optimizely, a service that lets you run A/B tests, defines it:

> AB testing is essentially an experiment where two or more variants of a page are shown to users at random, and statistical analysis is used to determine which variation performs better for a given conversion goal.
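Neither the definition above nor Coursera's post says how users are assigned to variants "at random". One common approach, shown here as a hypothetical sketch rather than Coursera's or Optimizely's implementation, is to hash a user ID together with an experiment ID. The resulting assignment is effectively random across users but stable for each user, so a learner keeps seeing the same version. The function name `assign_variant` and the IDs are illustrative.

```python
import hashlib


def assign_variant(user_id: str, experiment_id: str, variants: list[str]) -> str:
    """Deterministically map a user to one variant of an experiment (hypothetical helper).

    Hashing (experiment_id, user_id) spreads users roughly evenly across
    variants while always returning the same variant for a given user.
    """
    if not variants:
        raise ValueError("need at least one variant")
    key = f"{experiment_id}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the digest as an unsigned integer bucket.
    bucket = int.from_bytes(digest[:8], "big")
    return variants[bucket % len(variants)]


if __name__ == "__main__":
    # Hypothetical experiment with two versions of a course video.
    n_a = sum(
        1
        for i in range(10_000)
        if assign_variant(f"user-{i}", "video-cues", ["A", "B"]) == "A"
    )
    print(f"A: {n_a}, B: {10_000 - n_a}")
```

Including the experiment ID in the hash means the same learner can land in different arms of different experiments, which keeps concurrent tests independent of each other.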

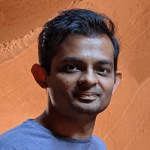

Coursera’s platform now allows researchers and instructors to A/B test course content. Students are randomly enrolled in different versions of the same course, and these versions are compared by tracking metrics such as course completion.

Source: Coursera Engineering blog
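Coursera's post does not specify which statistical test it uses to compare versions. As an illustration only, a standard way to compare completion rates between two randomly assigned groups is a two-proportion z-test; the sketch below assumes large enrollments (so the normal approximation holds) and uses made-up counts.

```python
import math


def two_proportion_z_test(completed_a: int, enrolled_a: int,
                          completed_b: int, enrolled_b: int) -> tuple[float, float]:
    """Compare the completion rates of course versions A and B.

    Returns (z, p_value), where p_value is two-sided and relies on the
    normal approximation, which is reasonable for large enrollments.
    """
    rate_a = completed_a / enrolled_a
    rate_b = completed_b / enrolled_b
    # Pooled rate under the null hypothesis that both versions complete equally often.
    pooled = (completed_a + completed_b) / (enrolled_a + enrolled_b)
    variance = pooled * (1 - pooled) * (1 / enrolled_a + 1 / enrolled_b)
    if variance == 0:
        raise ValueError("completion rate is 0% or 100% in both groups; test undefined")
    z = (rate_b - rate_a) / math.sqrt(variance)
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 400 of 5,000 learners finish version A, 460 of 5,000 finish B.
    z, p = two_proportion_z_test(400, 5000, 460, 5000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

With these hypothetical numbers, an 8.0% versus 9.2% completion rate gives z of roughly 2.1, which would usually be read as a statistically significant difference at the 5% level.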

Here are a few example tests taken directly from Coursera’s blog:

1. Chris Brooks, from the University of Michigan, wants to know: Do subtle gender cues impact female learners in STEM content? He randomized the appearance of male versus female workers in the background of some videos, and found that this had an impact on engagement with the material presented, but not on downstream retention or course completion.

2. Rene Kizilcec, from Stanford, is interested in the question: Can we mitigate a social identity threat (when people feel too estranged from the social context of their learning environment to relax and learn)? He randomized whether learners received focused interventions, for example whether they were asked their goals at the beginning of the course and then reminded throughout. His results suggest that mitigating social identity threat can reduce the global achievement gap.

According to Coursera, more than a dozen such experiments are currently running.

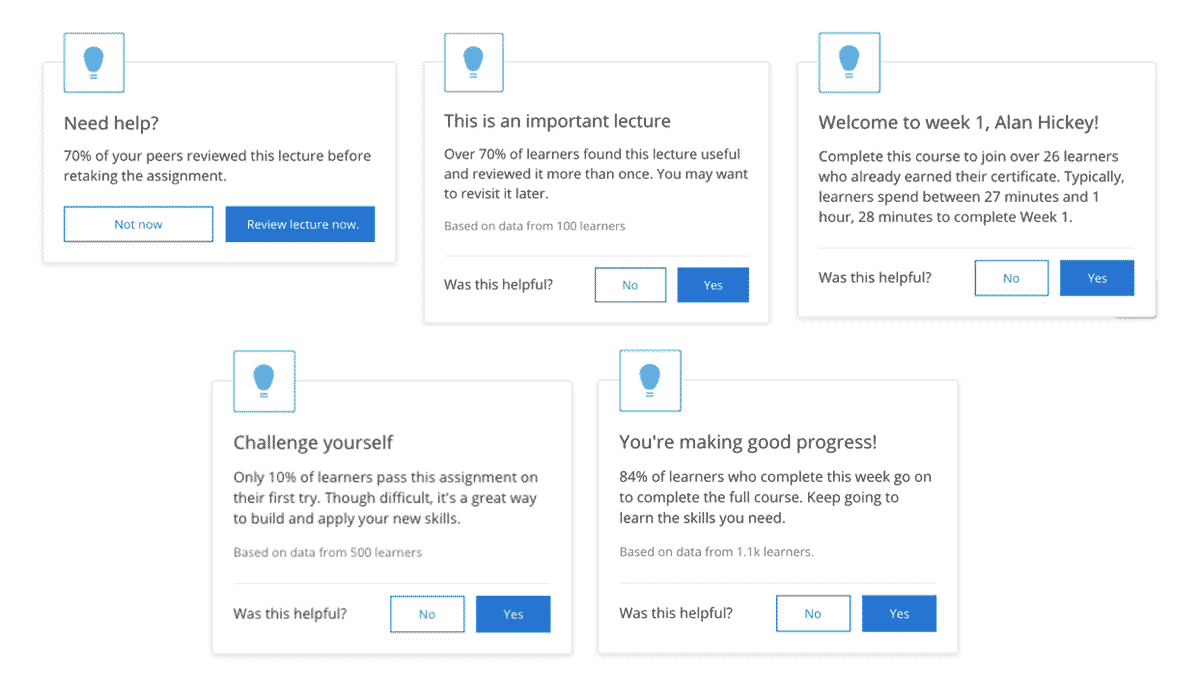

In another post on the engineering blog, Coursera also shared details on what it calls “Automated Coaching”. Learners going through a course receive different kinds of pop-up messages, or interventions, as Coursera calls it. For this, the company is leveraging its large dataset to build machine learning models, that help deliver these messages at the appropriate time. A few examples are shown below.
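The posts do not describe the models themselves. As a hypothetical sketch of the general idea, a model can score each learner's recent activity and trigger a message only when the predicted risk of stalling crosses a threshold. Everything below is an assumption for illustration: the features, the logistic-regression form, the hand-set weights (standing in for coefficients learned from historical data), and the message texts.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class LearnerState:
    days_since_last_visit: float
    fraction_items_completed: float  # between 0.0 and 1.0
    failed_quiz_attempts: int


# Hypothetical coefficients standing in for a model trained offline on
# historical learner activity; they are illustrative, not Coursera's.
WEIGHTS = {
    "days_since_last_visit": 0.35,
    "fraction_items_completed": -2.0,
    "failed_quiz_attempts": 0.5,
}
BIAS = -1.5


def disengagement_risk(state: LearnerState) -> float:
    """Logistic-regression score: estimated probability the learner stalls."""
    score = BIAS + sum(w * getattr(state, name) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-score))


def choose_intervention(state: LearnerState, threshold: float = 0.5) -> Optional[str]:
    """Return a pop-up message if the predicted risk is high enough, else None."""
    if disengagement_risk(state) < threshold:
        return None  # learner looks on track; don't interrupt
    if state.failed_quiz_attempts >= 2:
        return "Stuck on this quiz? Try reviewing the lecture on this topic first."
    return "Welcome back! Pick up where you left off."


if __name__ == "__main__":
    print(choose_intervention(LearnerState(10, 0.1, 3)))
```

Gating on a threshold is what "at the appropriate time" means in this sketch: learners who look on track are not interrupted, and the kind of message depends on what the learner appears to be struggling with.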

Coursera groups these interventions into three categories and reports that each category increases the number of course items learners complete by more than 2%.
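The article does not say whether the 2% figure is a relative or an absolute change. Assuming it is relative, it corresponds to the percentage lift in mean items completed for learners who received an intervention compared with a control group. The data below is hypothetical.

```python
from statistics import mean


def relative_lift(control: list[float], treatment: list[float]) -> float:
    """Percentage change in the mean of `treatment` relative to `control`."""
    baseline = mean(control)
    if baseline == 0:
        raise ValueError("control mean must be non-zero")
    return 100.0 * (mean(treatment) - baseline) / baseline


if __name__ == "__main__":
    # Hypothetical items completed per learner in each group.
    control = [18, 20, 22]
    treatment = [19, 21, 21.5]
    print(f"lift = {relative_lift(control, treatment):.1f}%")
```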

Further Reading:

How A/B Testing Powers Pedagogy on Coursera – Coursera Engineering – Medium

Automated coaching – Coursera Engineering – Medium
